As part of our ongoing translation quality research, we evaluated subtitle translation from English into Spanish, Japanese, Korean, Thai, and both Chinese variants — Simplified (zh-CN) and Traditional (zh-TW). We scored 1,002 subtitle segments using two industry-standard reference-free metrics: MetricX-24 (lower is better) and COMETKiwi (higher is better).

The top-line result: TranslateGemma-12b, a model trained specifically for translation, ranked #1 across all six language pairs. Gemini Flash Lite placed second, consistently beating Claude Sonnet and both GPT-5.4 variants. The separation came almost entirely from translation fidelity, not fluency.

However, human QA found something the metrics had missed entirely.

TranslateGemma ranked #1 in both Chinese variants. But when our linguists reviewed the Traditional Chinese output, they found the model was producing Simplified Chinese for both the zh-CN and zh-TW language codes. We retested with the zh-Hant language tag. The result: 76% of segments still came back in Simplified Chinese, 14% were correctly Traditional, and 10% were ambiguous. MetricX-24 and COMETKiwi returned the same high scores throughout, with no indication of a problem.

A few other findings: Claude ranked last in Japanese (fluent but divergent from source meaning), DeepSeek dropped sharply for Thai, and Japanese was consistently the hardest language for all models.

Key Findings by Language

The overall ranking — TranslateGemma first, Gemini Flash Lite second — held across most language pairs. But the gaps between models varied enormously depending on the target language. Here is what stood out in each.

Spanish (ES)

Spanish was the easiest language for every model. All six achieved high COMETKiwi scores and low MetricX-24 error scores, with relatively small gaps between first and last place. This is consistent with what we see in production: English-to-Spanish is one of the most mature translation directions, backed by abundant training data. If you are evaluating AI translation and want a baseline, Spanish is a reasonable starting point, but it is not representative of harder language pairs.

Japanese (JA)

Japanese was the hardest language in the benchmark, and it was not close. Every model scored worse on Japanese than on any other language, and the quality gap between the best and worst model was the widest here. Claude Sonnet ranked last for Japanese, producing output that read fluently but frequently diverged from the source meaning. This kind of failure is particularly dangerous because it looks correct to anyone who reads the translation without checking it against the source. For subtitle localization into Japanese, human review is not optional; it is the only way to catch meaning shifts that automated metrics miss.

Korean (KO)

Korean performed similarly to Spanish in terms of overall quality, with high scores across the board. TranslateGemma and Gemini Flash Lite separated themselves at the top, but even the lower-ranked models produced usable output. The consistency of these results suggests that Korean is well covered in the training data of major AI labs.

Thai (TH)

Thai was the second-hardest language after Japanese, and the one where model selection mattered most. DeepSeek dropped sharply for Thai, producing the worst scores of any model-language combination in the entire benchmark. TranslateGemma maintained its lead, but even the top model showed more variance segment-to-segment than it did for Spanish or Korean. Thai is a language where machine translation post-editing (MTPE) provides the best balance between speed and quality.

Chinese — Simplified (ZH-CN) and Traditional (ZH-TW)

Chinese is where the benchmark uncovered its most important finding. TranslateGemma ranked #1 in both Simplified and Traditional Chinese by a comfortable margin on automated metrics. But when our linguists reviewed the Traditional Chinese output, they discovered the model was producing Simplified Chinese characters for both language codes.

We re-ran the test using the zh-Hant language tag instead of zh-TW. The result: 76% of segments still came back in Simplified Chinese, 14% were correctly Traditional, and 10% were ambiguous because they used only characters shared by both scripts. MetricX-24 and COMETKiwi scored all of these segments identically; they had no mechanism to detect the wrong script.
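Part of the confusion is that the two tags say different things. Under BCP 47, zh-TW names a region (Taiwan) and leaves the script implied, while zh-Hant names the Traditional script directly. Since the model could not be trusted to honor either form, a QA step can resolve the tag to an expected script on its own side and check the output against that. The sketch below is illustrative: the function name `expected_chinese_script` and the region-to-script table are our assumptions, not part of any library, and it handles only common Chinese tags.

```python
from typing import Optional

# Assumed region-to-script defaults (hypothetical table); extend for your markets.
TRADITIONAL_REGIONS = {"TW", "HK", "MO"}
SIMPLIFIED_REGIONS = {"CN", "SG"}


def expected_chinese_script(tag: str) -> Optional[str]:
    """Return 'Hans' or 'Hant' for a Chinese BCP 47 tag, or None if unspecified."""
    subtags = tag.replace("_", "-").split("-")
    if subtags[0].lower() != "zh":
        raise ValueError(f"not a Chinese language tag: {tag!r}")
    rest = subtags[1:]
    # An explicit script subtag (e.g. zh-Hant, zh-Hant-HK) wins over the region.
    for sub in rest:
        if len(sub) == 4 and sub.isalpha() and sub.title() in ("Hans", "Hant"):
            return sub.title()
    # Otherwise fall back to a two-letter region subtag (e.g. zh-TW).
    for sub in rest:
        if len(sub) == 2 and sub.isalpha():
            if sub.upper() in TRADITIONAL_REGIONS:
                return "Hant"
            if sub.upper() in SIMPLIFIED_REGIONS:
                return "Hans"
    return None  # bare "zh" or an unmapped region: the script is not determined


for tag in ("zh-CN", "zh-TW", "zh-Hant", "zh-Hans-SG", "zh"):
    print(tag, "->", expected_chinese_script(tag))
```

Resolving zh-TW and zh-Hant to the same expected script means the downstream check does not depend on which tag a given model happens to honor.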

This matters because Simplified and Traditional Chinese are not interchangeable. Content distributed in Taiwan, Hong Kong, or Macau in Simplified characters signals carelessness or a complete lack of localization. For any project involving Chinese variants, AI quality control that checks script compliance is essential.
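A script-compliance check does not need a translation model. A minimal sketch, assuming a small hand-picked list of characters whose Simplified and Traditional forms differ: the code looks for marker characters from each set and labels the segment Simplified (Hans), Traditional (Hant), mixed, or ambiguous when no marker appears, which mirrors the three-way split in our zh-Hant retest. The marker list and the function names `classify_script` and `script_report` are hypothetical; a production check would draw on a full conversion table such as OpenCC's rather than 25 characters.

```python
from collections import Counter

# Illustrative markers only (hypothetical list): each Simplified character here
# has a distinct Traditional form at the same position in the second string.
SIMPLIFIED_MARKERS = set("这们说国时会来对为个过还没发见学让谢吗样现开关问题")
TRADITIONAL_MARKERS = set("這們說國時會來對為個過還沒發見學讓謝嗎樣現開關問題")


def classify_script(segment: str) -> str:
    """Label a segment 'Hans', 'Hant', 'mixed', or 'ambiguous'."""
    has_simp = any(ch in SIMPLIFIED_MARKERS for ch in segment)
    has_trad = any(ch in TRADITIONAL_MARKERS for ch in segment)
    if has_simp and has_trad:
        return "mixed"
    if has_simp:
        return "Hans"
    if has_trad:
        return "Hant"
    # No marker found: shared characters only, or ones this short list misses.
    return "ambiguous"


def script_report(segments: list[str], expected: str) -> dict:
    """Share of segments per label, plus segments whose script contradicts `expected`."""
    labels = [classify_script(s) for s in segments]
    shares = {label: n / len(segments) for label, n in Counter(labels).items()}
    # Ambiguous segments are reported but not failed; mixed ones always fail.
    failures = [s for s, label in zip(segments, labels) if label not in (expected, "ambiguous")]
    return {"shares": shares, "failures": failures}


segments = ["这是我们的问题。", "這是我們的問題。", "你好！"]
report = script_report(segments, expected="Hant")
print(report["shares"])    # one third each: Hans, Hant, ambiguous
print(report["failures"])  # ['这是我们的问题。']
```

Treating ambiguous segments as non-failures is a design choice; a stricter pipeline could route them to a linguist as well.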

Why Automated Metrics Are Not Enough

This benchmark was designed to use industry-standard automated metrics — MetricX-24 and COMETKiwi — and we believe they are the best reference-free metrics available today. Both are built on large language models (mT5-XXL and XLM-RoBERTa-XXL respectively), both predict human quality judgments with strong correlation, and both operate without needing a reference translation.

But they have structural blind spots that this benchmark exposed clearly.

Script detection. Neither metric can distinguish between Simplified and Traditional Chinese characters, so a translation in the wrong script scores identically to a correct one. This is not a minor edge case: it affects any project targeting Chinese-speaking markets, because wrong-variant output passes automated scoring unnoticed.

Source fidelity vs. fluency. COMETKiwi weights fluency heavily, which means a translation that reads naturally in the target language can score well even if it diverges from what the source actually says. Claude's Japanese output was a textbook example: fluent, natural, and frequently wrong in meaning. MetricX-24 catches some of this through its adequacy component, but not reliably at the segment level.
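One cheap way to surface part of the fluent-but-wrong pattern is to look for segments where the two metrics disagree: COMETKiwi rates the output highly while MetricX-24 reports a meaningful error score. A minimal sketch, with hypothetical thresholds (0.80 and 5.0) that would need calibrating on your own data; `SegmentScore` and `fluent_but_suspect` are illustrative names, and the example scores are made up.

```python
from typing import NamedTuple


class SegmentScore(NamedTuple):
    segment_id: int
    cometkiwi: float  # 0 to 1, higher is better
    metricx: float    # 0 to 25, lower is better


# Hypothetical thresholds for illustration only; calibrate on your own data.
FLUENT_COMETKIWI = 0.80
POOR_METRICX = 5.0


def fluent_but_suspect(scores: list[SegmentScore]) -> list[int]:
    """IDs of segments that COMETKiwi rates well but MetricX-24 penalizes."""
    return [
        s.segment_id
        for s in scores
        if s.cometkiwi >= FLUENT_COMETKIWI and s.metricx >= POOR_METRICX
    ]


# Made-up scores for three segments.
scores = [
    SegmentScore(1, 0.86, 1.2),  # both metrics agree: good
    SegmentScore(2, 0.84, 7.9),  # fluent, but MetricX-24 sees errors
    SegmentScore(3, 0.55, 9.0),  # both metrics agree: poor
]
print(fluent_but_suspect(scores))  # [2]
```

This only catches the cases MetricX-24 happens to flag. Fluent-but-wrong segments that both metrics score well still need a human reader.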

Formatting and conventions. Subtitle translation has constraints that general translation does not: line length, reading speed, timing cues, and cultural conventions for on-screen text all affect quality. Neither metric captures any of these. A subtitle that is technically accurate but too long to read within its timecoded display duration is, in practice, a failed translation.
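Basic subtitle constraints are easy to check mechanically alongside metric scoring. A minimal sketch, assuming common style-guide defaults of at most two lines, 42 characters per line, and 17 characters per second; real limits vary by language and client (CJK style guides commonly set much lower per-line limits), and style guides differ on whether spaces count toward reading speed. `Subtitle` and `subtitle_issues` are illustrative names.

```python
from dataclasses import dataclass

# Assumed limits for illustration; real values depend on language and style guide.
MAX_LINES = 2
MAX_CHARS_PER_LINE = 42
MAX_CHARS_PER_SECOND = 17.0


@dataclass
class Subtitle:
    start: float  # seconds
    end: float    # seconds
    text: str     # lines separated by "\n"


def subtitle_issues(sub: Subtitle) -> list[str]:
    """Return human-readable constraint violations for one subtitle."""
    issues = []
    lines = sub.text.split("\n")
    if len(lines) > MAX_LINES:
        issues.append(f"{len(lines)} lines (max {MAX_LINES})")
    for i, line in enumerate(lines, 1):
        if len(line) > MAX_CHARS_PER_LINE:
            issues.append(f"line {i}: {len(line)} chars (max {MAX_CHARS_PER_LINE})")
    duration = sub.end - sub.start
    if duration <= 0:
        issues.append("non-positive duration")
    else:
        # Reading speed here counts every character except line breaks.
        cps = len(sub.text.replace("\n", "")) / duration
        if cps > MAX_CHARS_PER_SECOND:
            issues.append(f"{cps:.1f} chars/sec (max {MAX_CHARS_PER_SECOND})")
    return issues


sub = Subtitle(1.0, 2.5, "I never said she stole the money,\nbut somebody did.")
print(subtitle_issues(sub))  # ['33.3 chars/sec (max 17.0)']
```

The example line fits the layout limits but would need 50 characters read in 1.5 seconds, exactly the kind of failure neither metric sees.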

Automated scores ranked TranslateGemma #1 for Traditional Chinese while most of its output was in the wrong script. That is the gap between metrics and reality.

The takeaway is not that automated metrics are useless — they are extremely valuable for initial screening, model comparison, and regression testing. But they should never be the only quality gate. For production subtitle localization, the combination we use internally — automated scoring followed by human linguistic review — catches the failures that metrics alone cannot.

Choosing an AI Model for Subtitle Translation

If you are evaluating AI models for subtitle or video localization, here is what this benchmark suggests.

For European languages (Spanish, Portuguese, French, German): We tested only Spanish, but those results suggest most modern models perform well. The gaps are small, and you can optimize for cost or speed without sacrificing much quality. Gemini Flash Lite offered the best quality-to-cost ratio in our tests.

For CJK languages (Chinese, Japanese, Korean): Model selection matters significantly. TranslateGemma led in Korean and Chinese (with the script caveat), but Gemini Flash Lite was more reliable overall because it did not produce wrong-script output. For Japanese specifically, every model struggled — plan for human post-editing regardless of which model you choose.

For languages with less training data (Thai, Vietnamese, Indonesian): Expect wider variance between models and more segment-level failures. DeepSeek's sharp drop for Thai is a cautionary example. Test on your actual content before committing to a model, and budget for MTPE review.

For any language with script variants: Automated metrics will not catch script-type errors. If your project involves Traditional vs. Simplified Chinese, Cyrillic vs. Latin Serbian, or any similar distinction, you need explicit script validation as part of your QA pipeline.
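For scripts that Unicode encodes separately, such as Cyrillic and Latin Serbian, a validation step can lean on Python's standard `unicodedata` module: for Cyrillic and Latin letters, the Unicode character name begins with the script name (for example, CYRILLIC SMALL LETTER SHA). A minimal sketch, where the 95% threshold and the function names are our assumptions. This approach cannot separate Simplified from Traditional Chinese, because Unicode encodes both as the same Han script, which is why a Chinese check needs a character-level table instead.

```python
import unicodedata
from collections import Counter


def script_counts(text: str) -> Counter:
    """Count letters by the first word of their Unicode name (LATIN, CYRILLIC, ...)."""
    counts: Counter = Counter()
    for ch in text:
        if ch.isalpha():
            counts[unicodedata.name(ch, "UNKNOWN").split()[0]] += 1
    return counts


def matches_script(text: str, expected: str, min_share: float = 0.95) -> bool:
    """True if at least `min_share` of the letters belong to the expected script."""
    counts = script_counts(text)
    total = sum(counts.values())
    if total == 0:
        return True  # no letters (e.g. numbers only), nothing to validate
    return counts[expected] / total >= min_share


print(matches_script("Добар дан, како сте?", "CYRILLIC"))  # True
print(matches_script("Dobar dan, kako ste?", "CYRILLIC"))  # False
```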

Regardless of model or language, we recommend a three-stage workflow for production subtitles: AI translation for the initial draft, automated metric scoring to flag outlier segments, and human linguistic review for final approval. This is the approach we use for our own audio and video localization projects — it catches what each stage alone would miss.
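The second stage, flagging outlier segments, can be as simple as a z-score over per-segment quality scores, so that the lowest-scoring segments reach a linguist first. A minimal sketch, assuming one combined score per segment where higher is better (such as the TQI described under Metrics) and a hypothetical cutoff of two standard deviations below the mean; `flag_outliers` is an illustrative name, not our production tooling, and the scores are made up.

```python
import statistics


def flag_outliers(scores: dict[int, float], z_cutoff: float = 2.0) -> list[int]:
    """Return IDs of segments scoring at least `z_cutoff` std devs below the mean."""
    values = list(scores.values())
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        return []  # every segment scored the same; nothing stands out
    return sorted(sid for sid, v in scores.items() if (mean - v) / sd >= z_cutoff)


# Made-up per-segment scores: nine typical segments and one clear outlier.
scores = {i: 0.70 for i in range(1, 10)}
scores[10] = 0.20
print(flag_outliers(scores))  # [10]
```

A z-score assumes most segments are fine; on a file where many segments fail, a fixed threshold or bottom-percentile cut may be a better trigger. Either way, flagging only sets review priority; it does not replace the final human pass.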

How We Measured Translation Quality

We tested six AI translation models on a single English-language subtitle file containing 1,002 segments. Each model translated the file into six target languages: Spanish, Japanese, Korean, Thai, Chinese Simplified, and Chinese Traditional. All models received the same source file, the same system prompt, and the same language-specific instructions.

Models Tested

- TranslateGemma-12b — Google's translation-specific model built on the Gemma architecture, fine-tuned exclusively for translation tasks

- Gemini Flash Lite — Google's lightweight general-purpose model, optimized for speed and cost

- Claude Sonnet — Anthropic's mid-tier model with strong multilingual capabilities

- GPT-5.4 — OpenAI's latest full-weight model

- GPT-5.4 Mini — OpenAI's smaller, faster variant

- DeepSeek — DeepSeek's large language model with competitive multilingual performance

Metrics

MetricX-24 is Google's learned evaluation metric, based on mT5-XXL with approximately 13 billion parameters. It operates in Quality Estimation mode — scoring translation quality using only the source text and the translation, with no reference translation required. The scale runs from 0 to 25, where lower scores indicate better quality.

COMETKiwi is Unbabel's reference-free quality estimation metric, based on XLM-RoBERTa-XXL with approximately 10.7 billion parameters. It predicts human Direct Assessment scores and returns a value between 0 and 1, where higher is better.

We combined both metrics into a single ranking score — the Translation Quality Index (TQI):

TQI = COMETKiwi × exp(−MetricX / 10)

The exponential decay term converts MetricX (where lower is better) into a multiplicative penalty. A MetricX score of 0 applies no penalty. A score of 2.5 (typical of good translations) reduces the TQI by about 22%. A score of 5 reduces it by 39%. This rewards models that perform well on both fluency and fidelity simultaneously.
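The formula is simple to reproduce. A minimal sketch that computes TQI and prints the penalty at a few MetricX values; the 0 to 25 range check reflects the MetricX-24 scale described above, and the two model scores in the example are hypothetical, chosen only to show how a lower MetricX error can outweigh a higher COMETKiwi score.

```python
import math


def tqi(cometkiwi: float, metricx: float) -> float:
    """Translation Quality Index = COMETKiwi * exp(-MetricX / 10)."""
    if not 0.0 <= metricx <= 25.0:
        raise ValueError(f"MetricX-24 scores range from 0 to 25, got {metricx}")
    return cometkiwi * math.exp(-metricx / 10)


# Share of the COMETKiwi score removed by the MetricX penalty.
for mx in (0.0, 2.5, 5.0, 25.0):
    print(f"MetricX {mx:4.1f}: TQI reduced by {1 - math.exp(-mx / 10):.0%}")
# MetricX  0.0: TQI reduced by 0%
# MetricX  2.5: TQI reduced by 22%
# MetricX  5.0: TQI reduced by 39%
# MetricX 25.0: TQI reduced by 92%

# Hypothetical scores: A is slightly less fluent but more faithful than B.
model_a = tqi(cometkiwi=0.82, metricx=2.0)  # about 0.67
model_b = tqi(cometkiwi=0.85, metricx=3.5)  # about 0.60
print(model_a > model_b)  # True
```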

What This Benchmark Does Not Cover

This benchmark measures translation quality only. It does not evaluate cost per segment, API latency, rate limits, context window handling, or integration complexity — all of which matter for production deployments. It also uses a single source file in a single domain (subtitles); results may differ for other content types like marketing copy, legal documents, or UI strings. For a broader view of how AI is reshaping the future of translation, see our analysis of what 20 years in localization has taught us about AI's role.

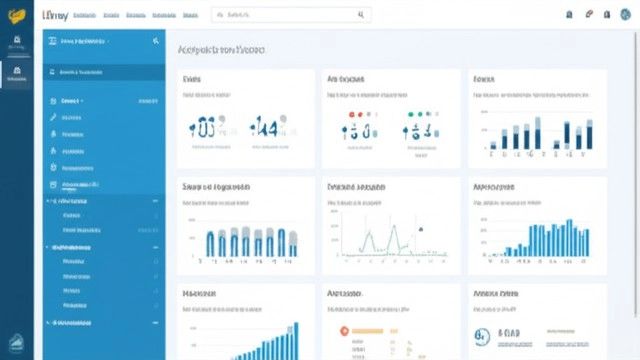
