That's the gap "just translate it" can't close. You can ship on time, pass QA, and still lose the market — because correct words can sound wrong, jokes land sideways, and some content runs into what your translators are willing to touch at all. Below are four patterns from twenty years of localization at Alconost, and what each one taught us about getting ahead of the failure instead of retrofitting trust after launch.

Trap 1: Right words, wrong register

Japanese encodes politeness, distance, and channel norms directly in grammar and vocabulary. There isn't one “marketing Japanese” the way there's one “marketing English” — the register you'd use for a teenage gamer is not the register you'd use for a B2B buyer, and there are stops in between. If your brief doesn't tell the translator which one, they pick. And different translators pick differently. Your error states sound chatty, your onboarding sounds corporate, and the product reads “translated” instead of “built for me.”

In Japanese, there are three main tones of voice. If you're addressing a business reader rather than a teenage gamer, the tone has to be very polite. And if you don't specify the tone for the translator, all your texts end up in the translator's own voice — which is culturally sensitive.

The fix isn't a better translator — it's a better brief. One page per priority locale: who you're talking to, the channel each surface lives in (in-app vs email vs store listing), three headlines that sound on-brand, two that don't. Ship that alongside screenshots or design links so the translator can see whether they're writing a button, an empty state, or a marketing hero. Tone drift is almost always a context-vacuum problem. Fill the vacuum before strings hit the TMS, not after the QA pass.

Trap 2: Some content needs more than translators — it needs a sourcing plan

A few years back we took on a dating app aimed at a niche segment of users. Localizing into most languages was straightforward. Arabic stopped us. Translator after translator declined: those users may exist, they told us, but our religion does not allow us to translate this. Eventually we found translators in the Arabic-speaking diaspora — several based in Germany — who agreed to take it on. The work shipped, but only after we'd rebuilt the supply side of the project from scratch.

We translated a niche dating app aimed at a niche user segment. In Arabic it became impossible — many translators told us: those users may exist, but our religion does not allow us to translate this. Eventually some translators living in Germany agreed. So it is negotiable, but these cultural barriers are real.

This isn't a translation-quality problem. It's a coverage problem, and it shows up before the first string moves. Some categories — adult content, gambling, alcohol, certain political or religious topics, contested medical content — collide with translator norms in ways that no brief or style guide can resolve. The translator either takes the work or doesn't, and "doesn't" is a perfectly legitimate answer. If you discover that in week three of a launch sprint, you are stuck.

The fix is to treat translator availability as a scoping question, not a delivery question. Before the first PO goes out, ask your localization lead which target locales are likely to friction on your category, and what the realistic sourcing path looks like for each: in-country translators, diaspora translators, or — if neither materializes — a different go-to-market choice for that locale entirely. Sometimes the answer is "we delay Arabic by a quarter and pilot in Turkish first." Sometimes it's "we ship, but we rewrite the marketing site rather than translate it." The point is to make that call deliberately, with the localization team in the room, before the timeline assumes a smooth path that doesn't exist.

A related pattern worth flagging in the same scoping pass: some markets resist English borrowings in the UI altogether. French is the standard example — the language has invented native terms for computer concepts that most other languages just imported from English. If your product leans on English-derived jargon, your French localization isn't a translation job, it's a glossary job, and you want to know that before you quote the timeline, not after.

Trap 3: The line translates, the joke doesn't

The Queen joke from the opening wasn't a one-off — it's the shape of an entire category of failure. Lines that survive translation perfectly but lose their point on the way across. Humor, idioms, proper-noun references, sports analogies, cultural shorthand: all of it assumes the reader shares context with the writer, and translation doesn't carry the assumption along for the ride. What reads playful in one market reads careless, confusing, or — when the reference brushes against politics, monarchy, or national identity — actively hostile in another.

Games and growth marketing hit this constantly because they lean on voice for engagement. But the same dynamic kills onboarding microcopy, error messages with personality, and any campaign hook that pivoted on a pun.

We localized a game for years. The developers were Russian, the game targeted a global audience in American-flavored English, and it included jokes about the Queen. A US audience would read those lightly — but a UK proofreader pushed back hard: keep the joke if you want, but not the Queen. This happens all the time.

Look at what's actually stacked in that example: the source culture isn't the target culture, and neither one is the language of the strings. Russian team, American English, UK reader. Three different cultural frames on a single line of copy. That's the situation most global brands are genuinely in — which is why "is it grammatically correct" is the wrong test.

The fix is to sort surfaces by what's at stake. Error states and form labels are translation jobs — preserve the line, preserve the meaning. Marketing taglines, character dialogue, jokes, and brand voice are transcreation jobs, where the linguist rewrites in the target language to preserve effect, not lines. Treat them as different kinds of work, with different timelines and different briefs. Then validate the high-stakes surfaces with reviewers who actually live in the target market — not only with linguists who share your source culture. A Russian translator with excellent English can faithfully render a Queen joke into something a UK reader will hate. That isn't a translator failure. It's a review-pipeline failure.

The job is to build the review pipeline so the right cultural reader sees the right surfaces — not just the right linguist.

Trap 4: Visuals are localizable too — and "default" usually means "Western"

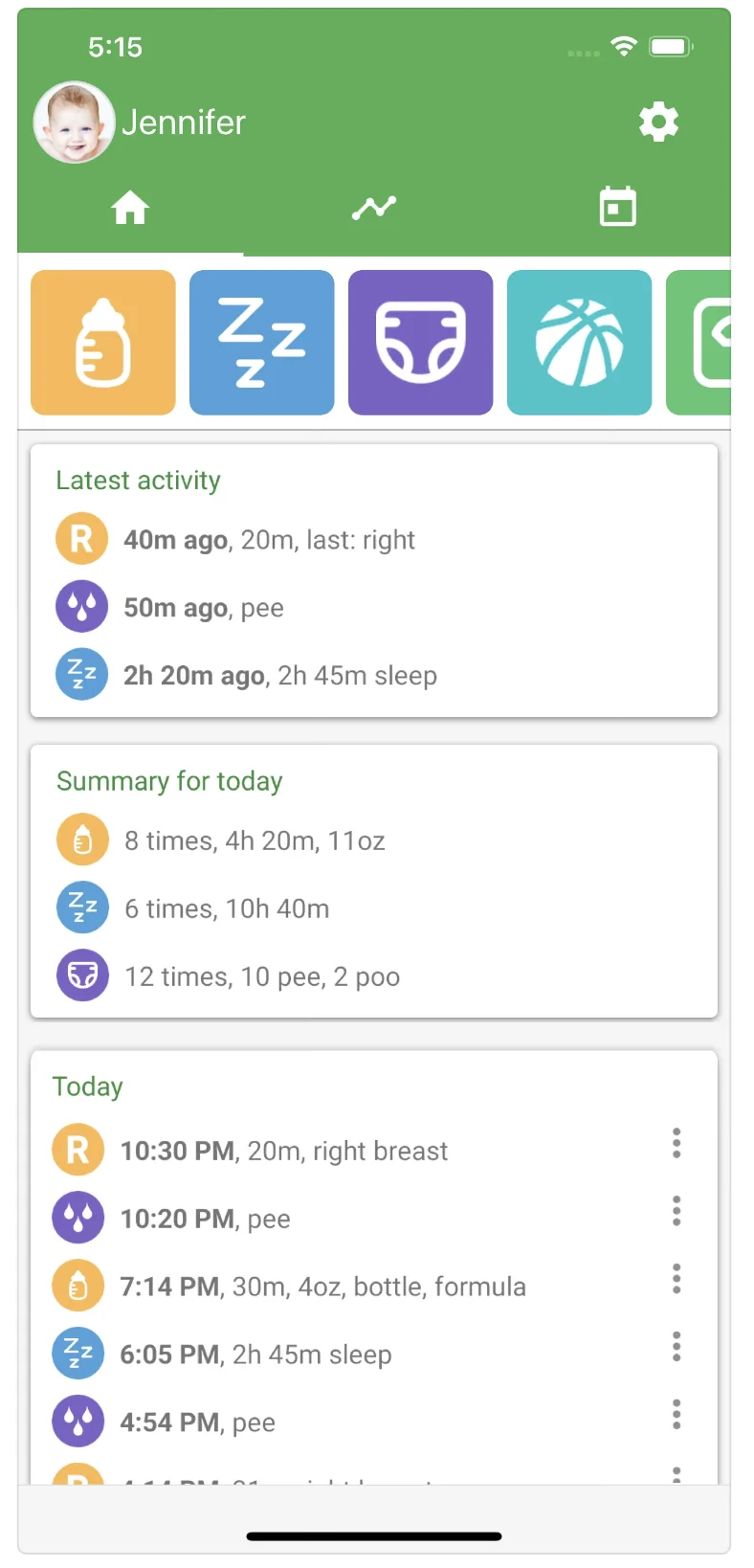

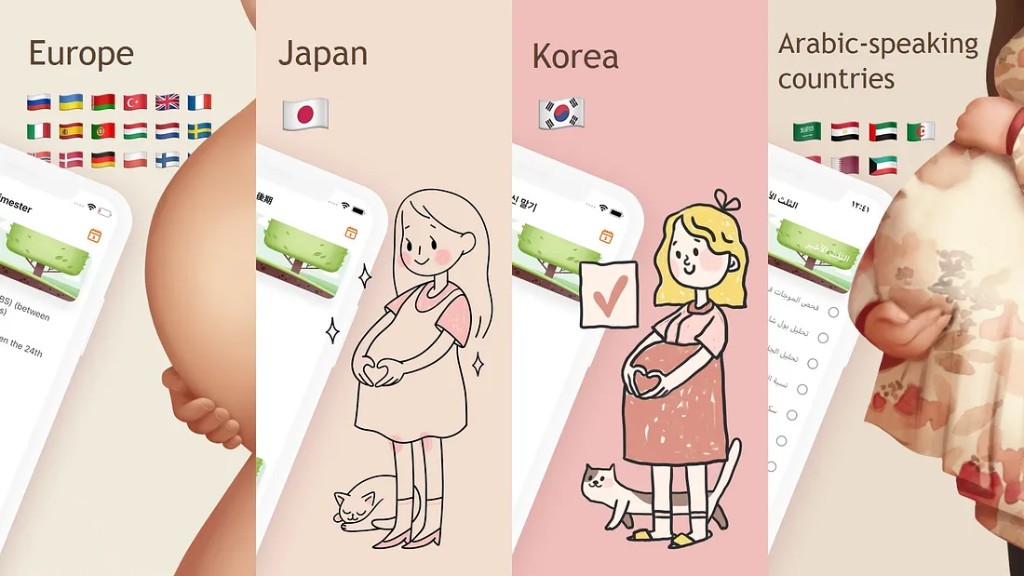

A pregnancy tracking app built in English ships with photography that feels neutral to its team: clean lines, muted palette, a calm clinical aesthetic. Localized into Japanese, the strings are accurate, the layout holds up, the dates format correctly. The app still feels wrong. Not broken — wrong. The visual language reads cold and clinical to a Japanese audience where the category convention runs softer, warmer, more kawaii. Same product, same content, same feature set. Different felt sense entirely, and the team can't name why their retention is off.

What's anime style for an Asian audience becomes very, very niche for a European one. A pregnancy tracking app localized from English into Japanese needs absolutely different imagery — more like cute. For every language, professional localization develops a style guide with those nuances.

Imagery carries cultural defaults the same way language does — and the defaults are even harder to see, because nobody on the source-culture team experiences their own visuals as culturally specific. They just look like the product. Color is the easiest example: white, green, and red carry meaningfully different associations across markets, and a palette that signals "premium" in one region can signal "funeral" or "warning" in another. Character art is another: an anime-adjacent illustration style that reads mainstream in Japan or Korea reads niche-otaku in much of Europe. Photography conventions, model selection, gesture, even the implied warmth of a UI illustration — all of it is making cultural claims your localized strings can't override.

The fix is the same shape as Trap 1's: build a per-locale style guide that captures visual register alongside linguistic register. What does category-appropriate imagery look like in this market? Which colors carry which associations? What level of stylization reads mainstream vs. niche? Then run a visual review on the same cadence as your LQA pass — ideally with the same in-market reviewers, since the same person who flags "this tone of voice is too casual for a B2B Japanese reader" is the person who'll flag "this hero image feels clinical."

One more surface worth scoping early: product names, mascots, and any made-up brand vocabulary. Run a cultural analysis before they harden in code and creative — there are a lot of names that sound clean in English and land weird, comic, or unfortunate in another language. Cheaper to find out at the brief stage than after the App Store icon ships.

What prevents the traps

You do not need a forty-page policy on day one. You do need a few artifacts that live alongside the strings, in places linguists and reviewers actually look:

- A one-page locale brief: persona, register, channel-by-channel tone, taboo topics, examples of what sounds on-brand and what doesn't.

- A per-locale visual style guide: reference imagery, palette guardrails, character-art conventions, and what "category-appropriate" looks like in that market.

- UI context in the TMS or repo: screenshots, comments, or design links so linguists know what each string is doing on screen.

- Early cultural review for product names, mascots, humor, and campaign hooks before they harden in code and creative.

- In-context LQA on builds for the locales that matter most — because grids hide tone and layout issues that users see on day one.

The through-line is simple: cultural knowledge beats literal fluency alone. Every one of these artifacts exists to put the right cultural reader in front of the right surface at the right time. Linguistic fluency without that pipeline produces correct copy that doesn't sound like the people you're trying to reach. Build the pipeline once so that most of the traps stop being traps.